Adds support for appending to an existing Excel table.

# Important Notes

- Renamed `Column_Mapping` to `Column_Name_Mapping`

- Changed new type name to `Map_Column`

- Added last modified time and creation time to `File`.

Truffle is using [MultiTier compilation](190e0b2bb7) by default since 21.1.0. That mean every `RootNode` is compiled twice. However benchmarks only care about peak performance. Let's return back the previous behavior and compile only once after profiling in interpreter.

# Important Notes

This change does not influence the peak performance. Just the amount of IGV graphs produced from benchmarks when running:

```

enso$ sbt "project runtime" "withDebug --dumpGraphs benchOnly -- AtomBenchmark"

```

is cut to half.

`AtomBenchmarks` are broken since the introduction of [micro distribution](https://github.com/enso-org/enso/pull/3531). The micro distribution doesn't contain `Range` and as such one cannot use `1.up_to` method.

# Important Notes

I have rewritten enso code to manual `generator`. The results of the benchmark seem comparable. Executed as:

```

sbt:runtime> benchOnly AtomBenchmarks

```

A semi-manual s/this/self appied to the whole standard library.

Related to https://www.pivotaltracker.com/story/show/182328601

In the compiler promoted to use constants instead of hardcoded

`this`/`self` whenever possible.

# Important Notes

The PR **does not** require explicit `self` parameter declaration for methods as this part

of the design is still under consideration.

This PR merges existing variants of `LiteralNode` (`Integer`, `BigInteger`, `Decimal`, `Text`) into a single `LiteralNode`. It adds `PatchableLiteralNode` variant (with non `final` `value` field) and uses `Node.replace` to modify the AST to be patchable. With such change one can remove the `UnwindHelper` workaround as `IdExecutionInstrument` now sees _patched_ return values without any tricks.

This introduces a tiny alternative to our stdlib, that can be used for testing the interpreter. There are 2 main advantages of such a solution:

1. Performance: on my machine, `runtime-with-intstruments/test` drops from 146s to 65s, while `runtime/test` drops from 165s to 51s. >6 mins total becoming <2 mins total is awesome. This alone means I'll drink less coffee in these breaks and will be healthier.

2. Better separation of concepts – currently working on a feature that breaks _all_ enso code. The dependency of interpreter tests on the stdlib means I have no means of incremental testing – ALL of stdlib must compile. This is horrible, rendered my work impossible, and resulted in this PR.

New plan to [fix the `sbt` build](https://www.pivotaltracker.com/n/projects/2539304/stories/182209126) and its annoying:

```

log.error(

"Truffle Instrumentation is not up to date, " +

"which will lead to runtime errors\n" +

"Fixes have been applied to ensure consistent Instrumentation state, " +

"but compilation has to be triggered again.\n" +

"Please re-run the previous command.\n" +

"(If this for some reason fails, " +

s"please do a clean build of the $projectName project)"

)

```

When it is hard to fix `sbt` incremental compilation, let's restructure our project sources so that each `@TruffleInstrument` and `@TruffleLanguage` registration is in individual compilation unit. Each such unit is either going to be compiled or not going to be compiled as a batch - that will eliminate the `sbt` incremental compilation issues without addressing them in `sbt` itself.

fa2cf6a33ec4a5b2e3370e1b22c2b5f712286a75 is the first step - it introduces `IdExecutionService` and moves all the `IdExecutionInstrument` API up to that interface. The rest of the `runtime` project then depends only on `IdExecutionService`. Such refactoring allows us to move the `IdExecutionInstrument` out of `runtime` project into independent compilation unit.

Auto-generate all builtin methods for builtin `File` type from method signatures.

Similarly, for `ManagedResource` and `Warning`.

Additionally, support for specializations for overloaded and non-overloaded methods is added.

Coverage can be tracked by the number of hard-coded builtin classes that are now deleted.

## Important notes

Notice how `type File` now lacks `prim_file` field and we were able to get rid off all of those

propagating method calls without writing a single builtin node class.

Similarly `ManagedResource` and `Warning` are now builtins and `Prim_Warnings` stub is now gone.

Drop `Core` implementation (replacement for IR) as it (sadly) looks increasingly

unlikely this effort will be continued. Also, it heavily relies

on implicits which increases some compilation time (~1sec from `clean`)

Related to https://www.pivotaltracker.com/story/show/182359029

@radeusgd discovered that no formatting was being applied to std-bits projects.

This was caused by the fact that `enso` project didn't aggregate them. Compilation and

packaging still worked because one relied on the output of some tasks but

```

sbt> javafmtAll

```

didn't apply it to `std-bits`.

# Important Notes

Apart from `build.sbt` no manual changes were made.

This is the 2nd part of DSL improvements that allow us to generate a lot of

builtins-related boilerplate code.

- [x] generate multiple method nodes for methods/constructors with varargs

- [x] expanded processing to allow for @Builtin to be added to classes and

and generate @BuiltinType classes

- [x] generate code that wraps exceptions to panic via `wrapException`

annotation element (see @Builtin.WrapException`

Also rewrote @Builtin annotations to be more structured and introduced some nesting, such as

@Builtin.Method or @Builtin.WrapException.

This is part of incremental work and a follow up on https://github.com/enso-org/enso/pull/3444.

# Important Notes

Notice the number of boilerplate classes removed to see the impact.

For now only applied to `Array` but should be applicable to other types.

Promoted `with`, `take`, `finalize` to be methods of Managed_Resource

rather than static methods always taking `resource`, for consistency

reasons.

This required function dispatch boilerplate, similarly to `Ref`.

In future iterations we will address this boilerplate code.

Related to https://www.pivotaltracker.com/story/show/182212217

The change promotes static methods of `Ref`, `get` and `put`, to be

methods of `Ref` type.

The change also removes `Ref` module from the default namespace.

Had to mostly c&p functional dispatch for now, in order for the methods

to be found. Will auto-generate that code as part of builtins system.

Related to https://www.pivotaltracker.com/story/show/182138899

Before, when running Enso with `-ea`, some assertions were broken and the interpreter would not start.

This PR fixes two very minor bugs that were the cause of this - now we can successfully run Enso with `-ea`, to test that any assertions in Truffle or in our own libraries are indeed satisfied.

Additionally, this PR adds a setting to SBT that ensures that IntelliJ uses the right language level (Java 17) for our projects.

A low-hanging fruit where we can automate the generation of many

@BuiltinMethod nodes simply from the runtime's methods signatures.

This change introduces another annotation, @Builtin, to distinguish from

@BuiltinType and @BuiltinMethod processing. @Builtin processing will

always be the first stage of processing and its output will be fed to

the latter.

Note that the return type of Array.length() is changed from `int` to

`long` because we probably don't want to add a ton of specializations

for the former (see comparator nodes for details) and it is fine to cast

it in a small number of places.

Progress is visible in the number of deleted hardcoded classes.

This is an incremental step towards #181499077.

# Important Notes

This process does not attempt to cover all cases. Not yet, at least.

We only handle simple methods and constructors (see removed `Array` boilerplate methods).

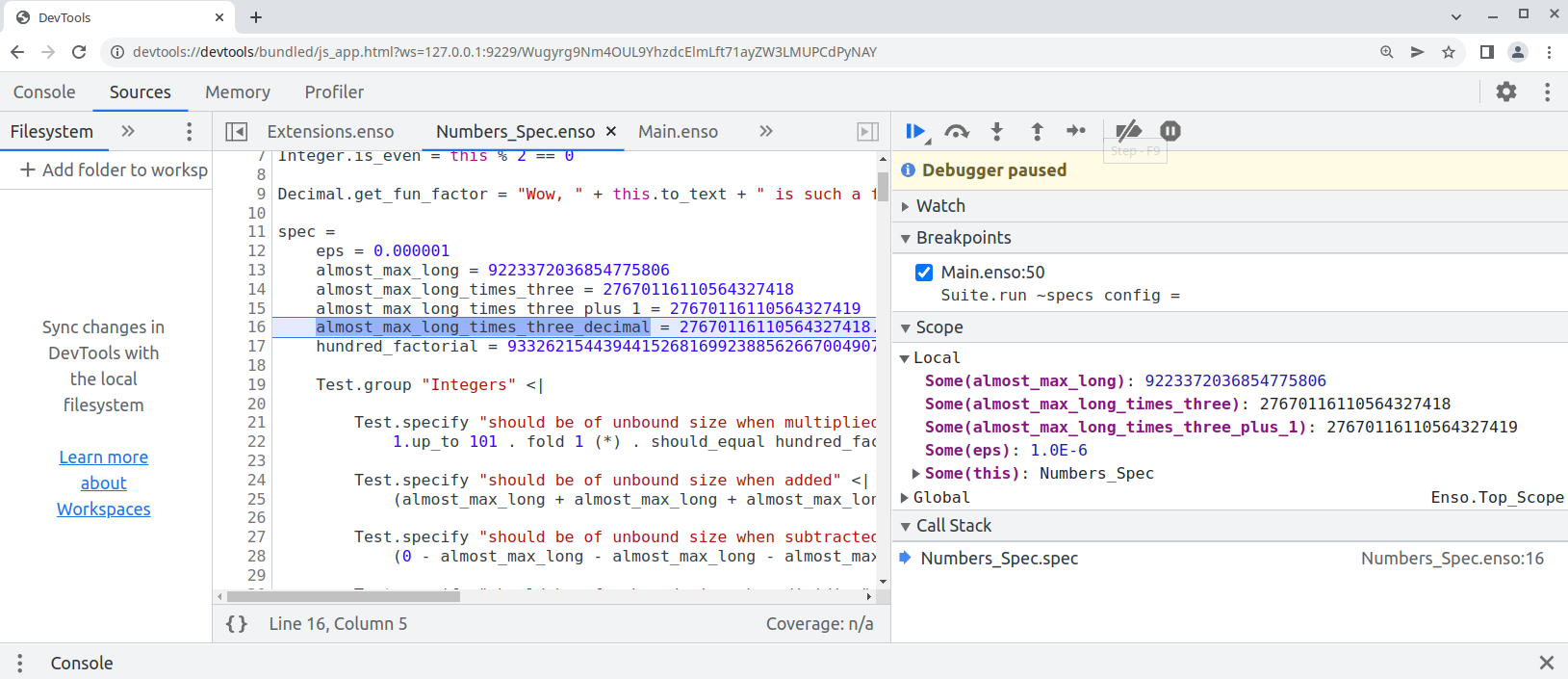

Finally this pull request proposes `--inspect` option to allow [debugging of `.enso`](e948f2535f/docs/debugger/README.md) in Chrome Developer Tools:

```bash

enso$ ./built-distribution/enso-engine-0.0.0-dev-linux-amd64/enso-0.0.0-dev/bin/enso --inspect --run ./test/Tests/src/Data/Numbers_Spec.enso

Debugger listening on ws://127.0.0.1:9229/Wugyrg9Nm4OUL9YhzdcElmLft71ayZW3LMUPCdPyNAY

For help, see: https://www.graalvm.org/tools/chrome-debugger

E.g. in Chrome open: devtools://devtools/bundled/js_app.html?ws=127.0.0.1:9229/Wugyrg9Nm4OUL9YhzdcElmLft71ayZW3LMUPCdPyNAY

```

copy the printed URL into chrome browser and you should see:

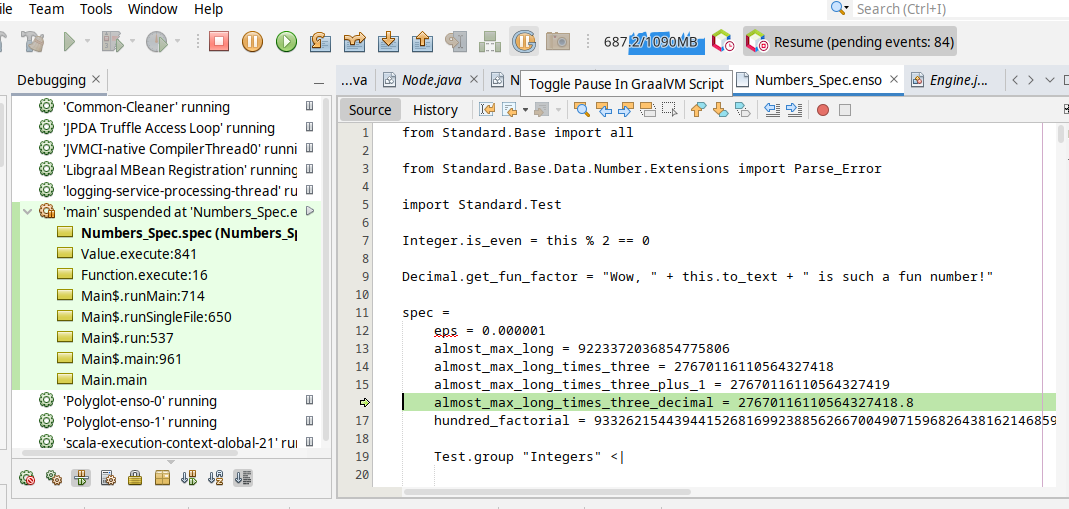

One can also debug the `.enso` files in NetBeans or [VS Code with Apache Language Server extension](https://cwiki.apache.org/confluence/display/NETBEANS/Apache+NetBeans+Extension+for+Visual+Studio+Code) just pass in special JVM arguments:

```bash

enso$ JAVA_OPTS=-agentlib:jdwp=transport=dt_socket,server=y,address=8000 ./built-distribution/enso-engine-0.0.0-dev-linux-amd64/enso-0.0.0-dev/bin/enso --run ./test/Tests/src/Data/Numbers_Spec.enso

Listening for transport dt_socket at address: 8000

```

and then _Debug/Attach Debugger_. Once connected choose the _Toggle Pause in GraalVM Script_ button in the toolbar (the "G" button):

and your execution shall stop on the next `.enso` line of code. This mode allows to debug both - the Enso code as well as Java code.

Originally started as an attempt to write test in Java:

* test written in Java

* support for JUnit in `build.sbt`

* compile Java with `-g` - so it can be debugged

* Implementation of `StatementNode` - only gets created when `materialize` request gets to `BlockNode`

This PR replaces hard-coded `@Builtin_Method` and `@Builtin_Type` nodes in Builtins with an automated solution

that a) collects metadata from such annotations b) generates `BuiltinTypes` c) registers builtin methods with corresponding

constructors.

The main differences are:

1) The owner of the builtin method does not necessarily have to be a builtin type

2) You can now mix regular methods and builtin ones in stdlib

3) No need to keep track of builtin methods and types in various places and register them by hand (a source of many typos or omissions as it found during the process of this PR)

Related to #181497846

Benchmarks also execute within the margin of error.

### Important Notes

The PR got a bit large over time as I was moving various builtin types and finding various corner cases.

Most of the changes however are rather simple c&p from Builtins.enso to the corresponding stdlib module.

Here is the list of the most crucial updates:

- `engine/runtime/src/main/java/org/enso/interpreter/runtime/builtin/Builtins.java` - the core of the changes. We no longer register individual builtin constructors and their methods by hand. Instead, the information about those is read from 2 metadata files generated by annotation processors. When the builtin method is encountered in stdlib, we do not ignore the method. Instead we lookup it up in the list of registered functions (see `getBuiltinFunction` and `IrToTruffle`)

- `engine/runtime/src/main/java/org/enso/interpreter/runtime/callable/atom/AtomConstructor.java` has now information whether it corresponds to the builtin type or not.

- `engine/runtime/src/main/scala/org/enso/compiler/codegen/RuntimeStubsGenerator.scala` - when runtime stubs generator encounters a builtin type, based on the @Builtin_Type annotation, it looks up an existing constructor for it and registers it in the provided scope, rather than creating a new one. The scope of the constructor is also changed to the one coming from stdlib, while ensuring that synthetic methods (for fields) also get assigned correctly

- `engine/runtime/src/main/scala/org/enso/compiler/codegen/IrToTruffle.scala` - when a builtin method is encountered in stdlib we don't generate a new function node for it, instead we look it up in the list of registered builtin methods. Note that Integer and Number present a bit of a challenge because they list a whole bunch of methods that don't have a corresponding method (instead delegating to small/big integer implementations).

During the translation new atom constructors get initialized but we don't want to do it for builtins which have gone through the process earlier, hence the exception

- `lib/scala/interpreter-dsl/src/main/java/org/enso/interpreter/dsl/MethodProcessor.java` - @Builtin_Method processor not only generates the actual code fpr nodes but also collects and writes the info about them (name, class, params) to a metadata file that is read during builtins initialization

- `lib/scala/interpreter-dsl/src/main/java/org/enso/interpreter/dsl/MethodProcessor.java` - @Builtin_Method processor no longer generates only (root) nodes but also collects and writes the info about them (name, class, params) to a metadata file that is read during builtins initialization

- `lib/scala/interpreter-dsl/src/main/java/org/enso/interpreter/dsl/TypeProcessor.java` - Similar to MethodProcessor but handles @Builtin_Type annotations. It doesn't, **yet**, generate any builtin objects. It also collects the names, as present in stdlib, if any, so that we can generate the names automatically (see generated `types/ConstantsGen.java`)

- `engine/runtime/src/main/java/org/enso/interpreter/node/expression/builtin` - various classes annotated with @BuiltinType to ensure that the atom constructor is always properly registered for the builitn. Note that in order to support types fields in those, annotation takes optional `params` parameter (comma separated).

- `engine/runtime/src/bench/scala/org/enso/interpreter/bench/fixtures/semantic/AtomFixtures.scala` - drop manual creation of test list which seemed to be a relict of the old design

A draft of simple changes to the compiler to expose sum type information. Doesn't break the stdlib & at the same time allows for dropdowns. This is still broken, for example it doesn't handle exporting/importing types, only ones defined in the same module as the signature. Still, seems like a step in the right direction – please provide feedback.

# Important Notes

I've decided to make the variant info part of the type, not the argument – it is a property of the type logically.

Also, I've pushed it as far as I'm comfortable – i.e. to the `SuggestionHandler` – I have no idea if this is enough to show in IDE? cc @4e6

Most of the functions in the standard library aren't gonna be invoked during particular program execution. It makes no sense to build their Truffle AST for the functions that are not executing. Let's delay the construction of the tree until a function is first executed.

[ci no changelog needed]

# Important Notes

The REPL used to use some builtin Java text representation leading to outputs like this:

```

> [1,2,3]

>>> Vector [1, 2, 3]

> 'a,b,c'.split ','

>>> Vector JavaObject[[Ljava.lang.String;@131c0b6f (java.lang.String[])]

```

This PR makes it use `to_text` (if available, otherwise falling back to regular `toString`). This way we get outputs like this:

```

> [1,2,3]

>>> [1, 2, 3]

> 'a,b,c'.split ','

>>> ['a', 'b', 'c']

```

Result of automatic formatting with `scalafmtAll` and `javafmtAll`.

Prerequisite for https://github.com/enso-org/enso/pull/3394

### Important Notes

This touches a lot of files and might conflict with existing PRs that are in progress. If that's the case, just run

`scalafmtAll` and `javafmtAll` after merge and everything should be in order since formatters should be deterministic.

Changelog:

- add: component groups to package descriptions

- add: `executionContext/getComponentGroups` method that returns component groups of libraries that are currently loaded

- doc: cleanup unimplemented undo/redo commands

- refactor: internal component groups datatype

Implements https://www.pivotaltracker.com/story/show/181805693 and finishes the basic set of features of the Aggregate component.

Still not all aggregations are supported everywhere, because for example SQLite has quite limited support for aggregations. Currently the workaround is to bring the table into memory (if possible) and perform the computation locally. Later on, we may add more complex generator features to emulate the missing aggregations with complex sub-queries.

Implements infrastructure for new aggregations in the Database. It comes with only some basic aggregations and limited error-handling. More aggregations and problem handling will be added in subsequent PRs.

# Important Notes

This introduces basic aggregations using our existing codegen and sets-up our testing infrastructure to be able to use the same aggregate tests as in-memory backend for the database backends.

Many aggregations are not yet implemented - they will be added in subsequent tasks.

There are some TODOs left - they will be addressed in the next tasks.

The mechanism follows a similar approach to what is being in functions

with default arguments.

Additionally since InstantiateAtomNode wasn't a subtype of EnsoRootNode it

couldn't be used in the application, which was the primary reason for

issue #181449213.

Alternatively InstantiateAtomNode could have been enhanced to extend

EnsoRootNode rather than RootNode to carry scope info but the former

seemed simpler.

See test cases for previously crashing and invalid cases.

- Added Minimum, Maximum, Longest. Shortest, Mode, Percentile

- Added first and last to Map

- Restructured Faker type more inline with FakerJS

- Created 2,500 row data set

- Tests for group_by

- Performance tests for group_by

Following the Slice and Array.Copy experiment, took just the Array.Copy parts out and built into the Vector class.

This gives big performance wins in common operations:

| Test | Ref | New |

| --- | --- | --- |

| New Vector | 41.5 | 41.4 |

| Append Single | 26.6 | 4.2 |

| Append Large | 26.6 | 4.2 |

| Sum | 230.1 | 99.1 |

| Drop First 20 and Sum | 343.5 | 96.9 |

| Drop Last 20 and Sum | 311.7 | 96.9 |

| Filter | 240.2 | 92.5 |

| Filter With Index | 364.9 | 237.2 |

| Partition | 772.6 | 280.4 |

| Partition With Index | 912.3 | 427.9 |

| Each | 110.2 | 113.3 |

*Benchmarks run on an AWS EC2 r5a.xlarge with 1,000,000 item count, 100 iteration size run 10 times.*

# Important Notes

Have generally tried to push the `@Tail_Call` down from the Vector class and move to calling functions on the range class.

- Expanded benchmarks on Vector

- Added `take` method to Vector

- Added `each_with_index` method to Vector

- Added `filter_with_index` method to Vector

* Implement conversions

start wip branch for conversion methods for collaborating with marcin

add conversions to MethodDispatchLibrary (wip)

start MethodDispatchLibrary implementations

conversions for atoms and functions

Implement a bunch of missing conversion lookups

final bug fixes for merged methoddispatchlibrary implementations

UnresolvedConversion.resolveFor

progress on invokeConversion

start extracting constructors (still not working)

fix a bug

add some initial conversion tests

fix a bug in qualified name resolution, test conversions accross modules

implement error reporting, discover a ton of ignored errors...

start fixing errors that we exposed in the standard library

fix remaining standard lib type errors not caused by the inability to parse type signatures for operators

TODO: fix type signatures for operators. all of them are broken

fix type signature parsing for operators

test cases for meta & polyglot

play nice with polyglot

start pretending unresolved conversions are unresolved symbols

treat UnresolvedConversons as UnresolvedSymbols in enso user land

* update RELEASES.md

* disable test error about from conversions being tail calls. (pivotal issue #181113110)

* add changelog entry

* fix OverloadsResolutionTest

* fix MethodDefinitionsTest

* fix DataflowAnalysisTest

* the field name for a from conversion must be 'that'. Fix remaining tests that aren't ExpressionUpdates vs. ExecutionUpdate behavioral changes

* fix ModuleThisToHereTest

* feat: suppress compilation errors from Builtins

* Revert "feat: suppress compilation errors from Builtins"

This reverts commit 63d069bd4f.

* fix tests

* fix: formatting

Co-authored-by: Dmitry Bushev <bushevdv@gmail.com>

Co-authored-by: Marcin Kostrzewa <marckostrzewa@gmail.com>

* Moving distinct to Map

* Mixed Type Comparable Wrapper

* Missing Bracket

Still an issue with `Integer` in the mixed vector test

* PR comments

* Use naive approach for mixed types

* Enable pending test

* Performance timing function

* Handle incomparable types cleanly

* Tidy up the time_execution function

* PR comments.

* Change log

- Add parser & handler in IDE for `executionContext/visualisationEvaluationFailed` message from Engine (fixes a developer console error "Failed to decode a notification: unknown variant `executionContext/visualisationEvaluationFailed`"). The contents of the error message will now be properly deserialized and printed to Dev Console with appropriate details.

- Fix a bug in an Enso code snippet used internally by the IDE for error visualizations preprocessing. The snippet was using not currently supported double-quote escaping in double-quote delimited strings. This lack of processing is actually a bug in the Engine, and it was reported to the Engine team, but changing the strings to single-quoted makes the snippet also more readable, so it sounds like a win anyway.

- A test is also added to the Engine CI, verifying that the snippet compiles & works correctly, to protect against similar regressions in the future.

Related: #2815

Changelog:

- feat: during the boot, prune outdated modules

from the suggestions database

- feat: when renaming the project, send updates

about changed records in the database

- refactor: remove deprecated

executionContext/expressionValuesComputed

notification

PR adds the new executionContext/expressionUpdates

API that replaces executionContext/expressionValueUpdates

notification, and in the future will be extended to support

the dataflow errors.

Changelog:

- update: execution logic to use qualified names

- update: populate runtime updates with qualified names

- update: suggestions builder to use qualified names

add: missing to_json conversions

fix: NPE in instrumentation

fix: EditFileCmd scheduling

fix: send visualization errors to the text endpoint

fix: preserve original location in the VectorLiterals pass

- fix the issue when duplicate execution jobs were never canceled.

- fix the issue in the file edit handler, when the edits can be received

in a different order.

A bunch of improvements to the suggestions

system. Suggestions are extracted to the tree data

structure. The tree allows producing better diffs

between the file versions. And better diffs reduce

the number of updates that are sent to the IDE

after a file change, and consequently fix the

issue when the runtime type got overwritten with

the compile-time type.

Add new `executionContext/executionStatus`

notification returning a list of diagnostic

messages containing localized (linked to the

location in the code) information about

compilation errors and warnings, as well as

runtime errors with stack traces.

PR implements TailCallException handler

in the IdExecutionInstrument sending

correct value updates.

- implement onReturnExceptional of the

runtime instrument

- add onExceptionalCallback to the

runtime instrument

PR introduces the MetodCallsCache created per frame execution, meaning

that it is not persisted in between the runs. The cache tracks which

calls have been fired during the program execution (and sent as a

notification to the user). When the program finishes, we compute the set

of calls that have not been executed and send them to the user as well.

- add: MethodCallsCache temporary storage tracking the executed method

calls

- add: sendMethodCallUpdates flag enabling sending all the method calls

of the frame as a value updates

In the current workflow, at first, the default Unnamed project is

created, and the Suggestions database is populated with entries from the

Unnamed.* modules. When the user changes the name of the project, we

should update all modules in the Suggestion Database with the new

project name.

This PR implements module renaming in the Suggestions database and fixes

the initialization issues.

- add: search/invalidateSuggestionsDatabase JSON-RPC command that resets

the corrupted Suggestions database

- update: SuggestionsHandler to rename the modules in the

SuggestionsDatabase when the project is renamed

- fix: MainModule initialization

1. The metadata objects weren't being duplicated when duplicating

the IR. This meant that the later passes would write metadata

multiples times into one store (reference), causing wrong

behaviour at codegen time.